TipText: Eyes-Free Text Entry on a Fingertip Keyboard

Zheer Xu*, Pui Chung Wong*, Jun Gong, Te-Yen Wu, Aditya Shekhar Nittala, Xiaojun Bi, Jürgen Steimle, Hongbo Fu, Kening Zhu, Xing-Dong Yang (* co-primary authors)

ACM Symposium on User Interface Software and Technology (UIST), 2019. Best Paper Award [PDF] [Video]

Motivation

As computing devices become tightly integrated into our daily living and working environments, users often need easy-to-carry, always-available input devices to interact with them subtly. One-handed micro thumb-tip gestures offer new opportunities for such fast, subtle, and always-available interactions, especially on devices with limited input space (e.g., wearables). Much like gesturing on a trackpad, using the thumb-tip to interact with the virtual world through the index finger is a natural way to perform input. This has become increasingly practical with rapid advances in sensing technologies, especially in epidermal devices and interactive skin technologies. While many mobile information tasks (e.g., dialing numbers) can be handled using micro thumb-tip gestures, text entry has been overlooked, even though it accounts for approximately 40% of mobile activity.

Design Considerations

- Learnability. We considered three types of learnability: learnability of input technique, learnability of keyboard layout, and learnability of eyes-free text entry.
- Eyes-Free Input. We considered two types of eyes-free conditions: typing without looking at the finger movement and typing without looking at the keyboard.
- Accuracy. We considered two types of accuracy: accuracy of input technique and accuracy of text entry method.
- Efficiency. The efficiency of a letter-based text entry method is mostly related to word disambiguation.

With these factors in mind, we designed our thumb-tip text entry technique. It comprises a miniature QWERTY keyboard that resides invisibly on the first segment (i.e., the distal phalanx) of the index finger. When typing in an eyes-free context, a user selects each key based on their natural spatial awareness of the location of the desired key. The system searches a dictionary for words corresponding to the sequence of selected keys and provides a list of candidate words ordered by probability, calculated using a statistical decoder.
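The dictionary lookup and candidate ranking described above can be illustrated with a minimal sketch. It is not the decoder used in TipText: the grouping of letters onto keys (`KEY_GROUPS`), the word counts (`WORD_COUNTS`), and all function names are hypothetical, and the ranking uses only word frequency, assuming every key press lands on the intended key.

```python
from collections import defaultdict

# Hypothetical grouping of QWERTY letters onto six keys (illustrative only).
KEY_GROUPS = {
    0: "qwert",
    1: "yuiop",
    2: "asdfg",
    3: "hjkl",
    4: "zxcv",
    5: "bnm",
}
LETTER_TO_KEY = {ch: key for key, letters in KEY_GROUPS.items() for ch in letters}

# Toy word counts, made up for illustration (not from any real corpus).
WORD_COUNTS = {
    "the": 500, "eke": 2,
    "tie": 40, "toe": 30, "wit": 15,
    "out": 200, "put": 90, "pit": 20,
}


def key_sequence(word):
    """Map a word to the sequence of keys that would be pressed to type it."""
    return tuple(LETTER_TO_KEY[ch] for ch in word)


def build_index(word_counts):
    """Group dictionary words by their key sequence."""
    index = defaultdict(list)
    for word, count in word_counts.items():
        index[key_sequence(word)].append((word, count))
    return index


def decode(keys, index):
    """Return candidate words for a key sequence, most probable first.

    Probability here is the word's share of the total count among the
    words that match the key sequence (a unigram model).
    """
    candidates = index.get(tuple(keys), [])
    total = sum(count for _, count in candidates)
    ranked = sorted(candidates, key=lambda wc: wc[1], reverse=True)
    return [(word, count / total) for word, count in ranked]


INDEX = build_index(WORD_COUNTS)

if __name__ == "__main__":
    for word, prob in decode(key_sequence("toe"), INDEX):
        print(f"{word}: {prob:.2f}")
```

Because several words share a key sequence under this grouping, the user's intended word may not be the top candidate, which is why a ranked list is shown. A fuller decoder would also need to model imprecise key selection, which this sketch omits.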

Sensing Hardware

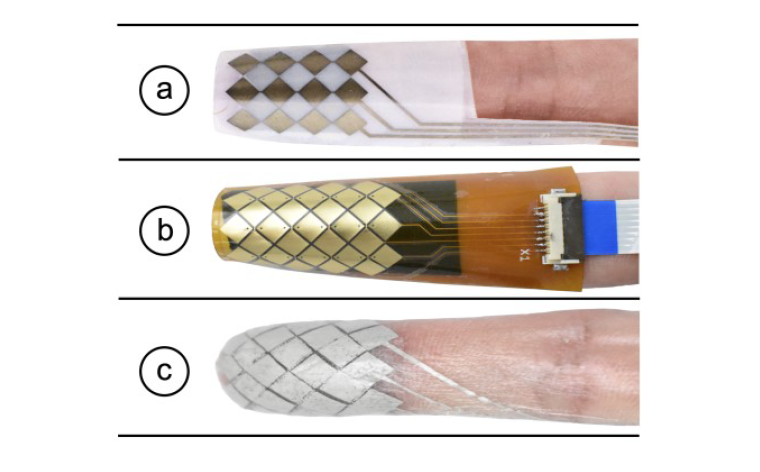

Our sensor development followed an iterative process. We first developed a prototype using conductive inkjet printing on PET film, using a Canon IP100 desktop inkjet printer filled with conductive silver nanoparticle ink (Mitsubishi NBSIJ-MU01). Once the design was tested and its basic functionality on the finger pad confirmed, we created a second prototype with a flexible printed circuit (FPC), which gave us more reliable sensor readings. It is 0.025–0.125 mm thick and measures 21.5 mm × 27 mm. Finally, we developed a highly conformal version on temporary tattoo paper (~30–50 μm thick). We screen-printed conductive traces using silver ink (Gwent C2130809D5) overlaid with PEDOT:PSS (Gwent C2100629D1). A layer of resin binder (Gwent R2070613P2) was printed between the electrode layers to isolate them from each other. Two layers of temporary tattoos were added to insulate the sensor from the skin.

Figure. (a) first prototype with PET film; (b) second prototype with FPC; (c) third prototype on temporary tattoo paper.

Selected Press Coverage

Arduino: TipText enables one-handed text entry using a fingertip keyboard

EurekAlert: Dartmouth lab introduces the next wave of interactive technology