Instrumenting and Analyzing Fabrication Activities, Users, and Expertise

Jun Gong, Fraser Anderson, George Fitzmaurice, Tovi Grossman. ACM Conference on Human Factors in Computing Systems (CHI), 2019. [PDF] [Video]

Motivation

Fabrication and making activities are becoming increasingly popular, both in research and among the general population. A wider range of individuals with varying abilities, requirements, and skills are coming to fabrication spaces to work on their own projects. However, the tools and environments used in the making process do not sense or adapt to the fabrication context. We therefore envision a monitored, reactive, and adaptive fabrication space that provides personalized information, feedback, and assistance to users while they work in the space. One example is providing customized tutorials to users at different expertise levels. Toward this goal, we explore the sensorization of making and fabrication activities, treating the environment, the tools, and the users as separate entities that can each be instrumented for data collection. From the collected data, we are interested in three kinds of contextual information: which users are performing the activities, which activities are being performed, and what level of expertise the users have.

Sensing System

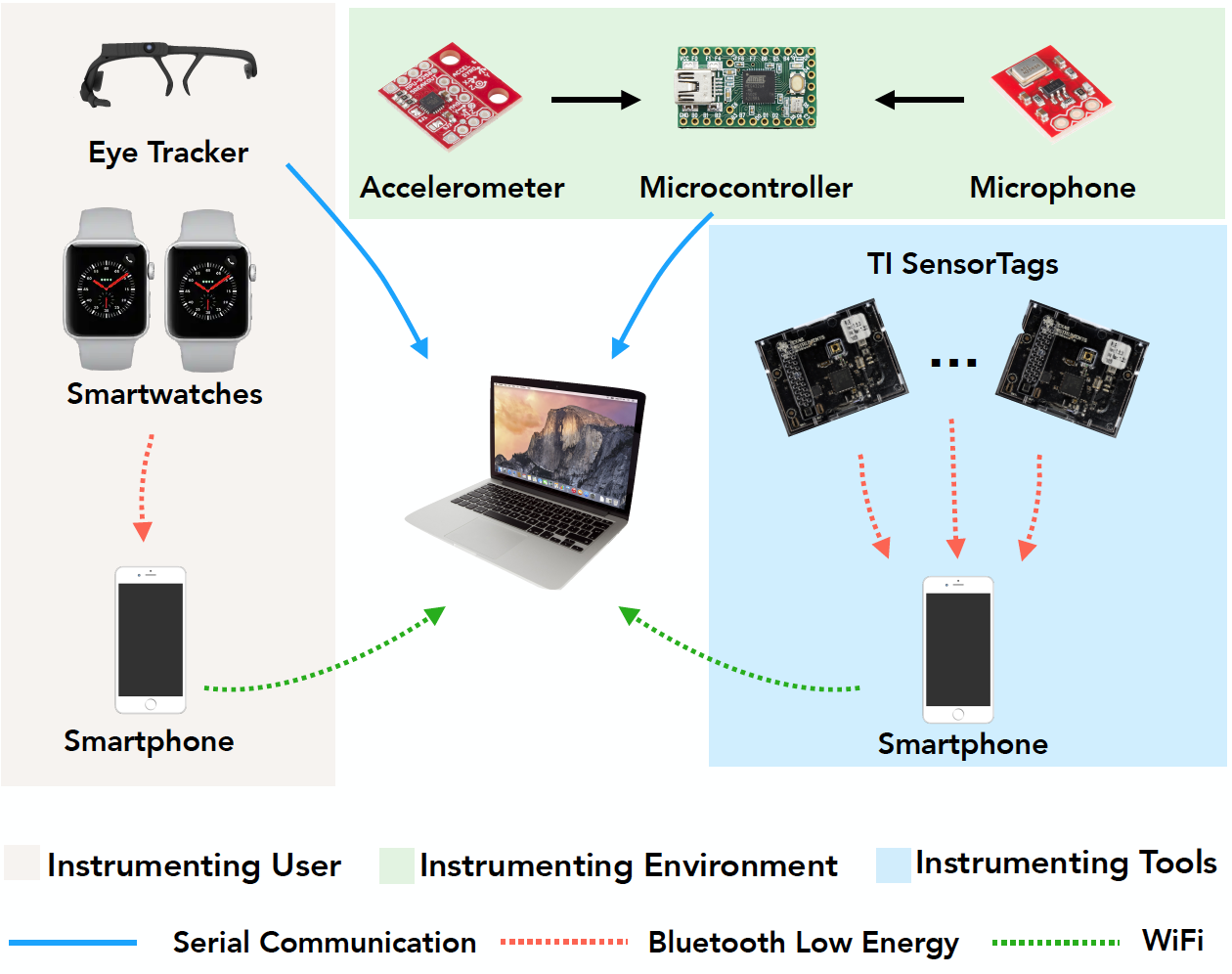

Because fabrication activities are conducted by an individual, using a tool, within an environment, we explore placing different types of sensors on each of these three entities.

To instrument the environment, we used an accelerometer and a microphone. The high-frequency accelerometer captures vibration signatures while users perform different physical tasks, and the audio data can help infer which machinery or appliances are being used in the space. Both sensors were soldered onto a custom PCB and sampled by a Teensy microcontroller, which transmitted the data to a laptop over a serial connection.
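As an illustration of what a "vibration signature" could look like in practice, the sketch below computes a magnitude spectrum from one window of single-axis accelerometer samples and reports the dominant frequency. This is not the authors' pipeline: the feature choice, the function names `magnitude_spectrum` and `dominant_frequency`, and the sample rate are all assumptions made for the example, and a naive DFT is used only to keep the code dependency-free.

```python
import cmath
import math


def magnitude_spectrum(signal, sample_rate):
    """Return (frequency_hz, magnitude) pairs for one window of samples.

    Naive O(n^2) DFT; fine for a short window in a sketch, but a real
    pipeline would more likely use an FFT library.
    """
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the DC offset (e.g. gravity)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        spectrum.append((k * sample_rate / n, abs(acc) / n))
    return spectrum


def dominant_frequency(signal, sample_rate):
    """Frequency (Hz) of the strongest non-DC bin in the window."""
    bins = magnitude_spectrum(signal, sample_rate)[1:]  # skip the DC bin
    return max(bins, key=lambda fm: fm[1])[0]


# Hypothetical example: a 50 Hz vibration sampled at an assumed 1 kHz.
window = [math.sin(2 * math.pi * 50 * i / 1000) for i in range(200)]
print(dominant_frequency(window, sample_rate=1000))
```

Different machines and hand tools would be expected to produce different spectra, which is one plausible way the environment sensors could help distinguish activities.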

To instrument the tools, we used a SensorTag from Texas Instruments, because it contains a wide variety of sensors and its compact, wireless design has minimal effect on the user’s operation of the tool. This small sensor package contains a 9-axis IMU as well as ambient light, humidity, temperature, pressure, and magnetic sensors. For our purposes, we used the 9-axis IMU (accelerometer, gyroscope, and magnetometer) to track the tool’s orientation and vibration, which we believe could be good indicators of a user’s expertise level. The ambient light sensor was also used for a similar purpose. The SensorTag connected to an iPhone through Bluetooth Low Energy, and the data was then transmitted to the laptop over WiFi.
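To make "orientation and vibration" concrete, here is a minimal sketch of two features that could be derived from the tool-mounted accelerometer: a static tilt estimate (pitch and roll from the gravity vector) and a simple vibration level (spread of the acceleration magnitude within a window). The function names and the choice of features are assumptions for illustration; the source does not specify how these quantities were computed, and a fuller orientation estimate would also fuse the gyroscope and magnetometer.

```python
import math
from statistics import pstdev


def tilt_degrees(ax, ay, az):
    """Estimate (pitch, roll) in degrees from one accelerometer sample.

    Assumes the tool is nearly static so the reading is dominated by gravity.
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return pitch, roll


def vibration_level(window):
    """Population standard deviation of acceleration magnitude over a window.

    window: list of (ax, ay, az) samples. A steadier grip should yield a
    smaller value; this is a hypothetical proxy, not the paper's feature.
    """
    magnitudes = [math.sqrt(ax * ax + ay * ay + az * az) for ax, ay, az in window]
    return pstdev(magnitudes)


# Tool lying flat (gravity along +z) versus rolled onto its side.
print(tilt_degrees(0.0, 0.0, 1.0))
print(tilt_degrees(0.0, 1.0, 0.0))
```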

To instrument the users, we used off-the-shelf wearable devices. Each user wore an eye tracker that recorded gaze position. From the gaze data, we are interested in whether the user is looking at a fixed point while performing the task, so we calculate the number of saccades within a time window. Each person was also outfitted with two Apple Watches, one worn on each wrist. The watches recorded hand movement via accelerometers and gyroscopes. Heart rate was also recorded, which may indicate the user’s confidence while performing the tasks. Each smartwatch was connected to an iPhone running an application that read the sensor data and relayed it to the laptop over WiFi.
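Counting saccades within a time window can be sketched with a simple velocity-threshold rule: a saccade starts whenever gaze speed rises above a threshold. The source does not say how saccades were detected, so the detection rule, the `count_saccades` name, the sample format, and the 30 deg/s default below are all assumptions to be tuned for the eye tracker in use.

```python
from math import hypot


def count_saccades(samples, velocity_threshold=30.0):
    """Count saccades in one window of gaze samples.

    samples: list of (t_seconds, x_deg, y_deg) tuples sorted by time.
    velocity_threshold: gaze speed in deg/s above which movement is
        treated as a saccade (hypothetical default).
    """
    count = 0
    in_saccade = False
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order timestamps
        speed = hypot(x1 - x0, y1 - y0) / dt
        if speed > velocity_threshold:
            if not in_saccade:
                count += 1  # count each fast movement once, at its onset
            in_saccade = True
        else:
            in_saccade = False
    return count


# Gaze holds still, jumps 5 degrees, holds, then jumps back: two saccades.
window = [
    (0.00, 0.0, 0.0), (0.01, 0.0, 0.0), (0.02, 5.0, 0.0), (0.03, 5.0, 0.0),
    (0.04, 5.0, 0.0), (0.05, 0.0, 0.0), (0.06, 0.0, 0.0),
]
print(count_saccades(window))
```

A low count over a window suggests the user is fixating on one point, while a high count suggests the gaze is moving around the workspace.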

Representative Physical Tasks

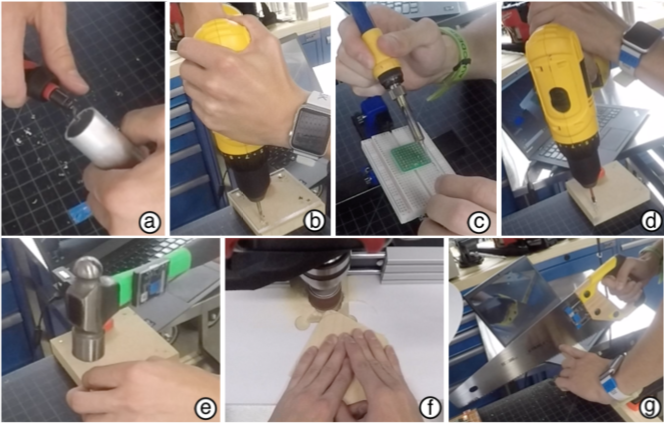

Each participant was asked to perform seven representative physical tasks.

(a) Use the deburring tool to clean the inside edge of a roughly-cut aluminum pipe.

(b) Drill a hole through a piece of acrylic.

(c) Solder separate male headers onto a general-purpose PCB.

(d) Use a hand drill to drive a screw into a piece of wood.

(e) Hammer a nail into a piece of wood.

(f) Sand a piece of wood to the marked contour with the spindle-sander and drill press.

(g) Use the hand saw to make a straight, clean cut in a piece of wood.

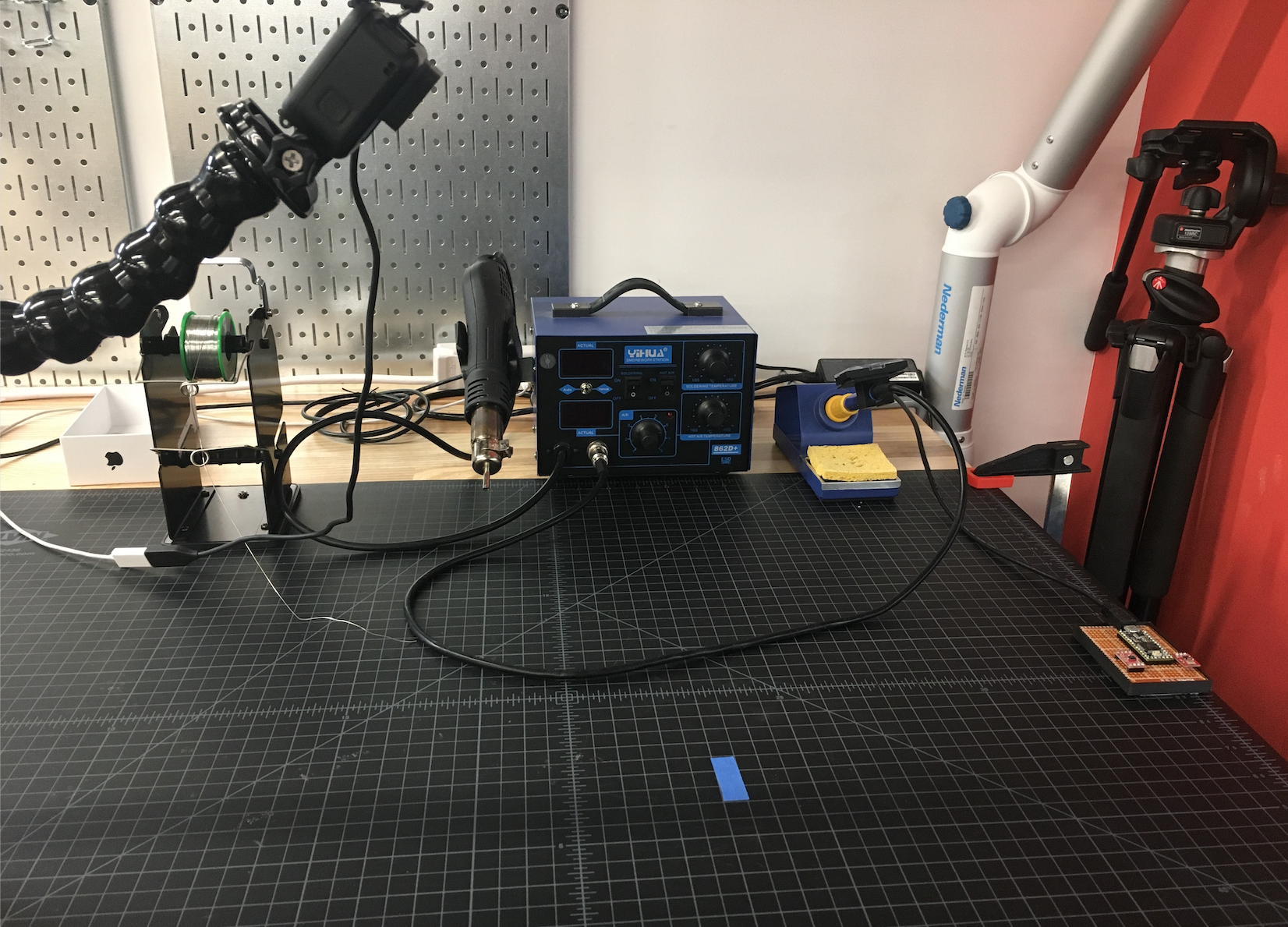

Workbench where participants performed the tasks:

Materials I prepared for the participants: